You Don't Need a Better Chat Multiplexer for AI Agents

Writing code was never the bottleneck. Reviewing it isn't either. Deciding things is.

Atlassian's 2025 developer survey of 3,500 developers and managers across six countries found that developers spend 16% of their time coding. The biggest reported time-wasters are finding information, adapting to new technology, context switching between tools, and collaborating with other teams. AI coding assistants make that 16% faster; the bigger bottlenecks are elsewhere.

When you run one agent, your job is to read what it produces. You look at your terminal, watch the diffs fly by, maybe scroll back up and squint a bit. LGTM. When you try to run ten agents, that loop doesn't scale; it turns into a different job altogether. You're not just reading diffs anymore; you're choosing which diff to read first. Agent three is paused on a tool permission request. Agent six wants to know whether the hardcoded secret it found in config/dev.yaml is a real credential or a stub. Agent two has been staring at you for 20 minutes, waiting on a branch name. You have to pick which one to answer, and in what order. And you have to remember which agent is doing what.

If 2025 was the year of the "coding agent CLI", then 2026 is starting to look like the year of "slap a GUI on top of the CLIs from 2025". Since the start of the year, I've counted more than a dozen new multi-agent coding orchestration tools. Line up their screenshots and they're the same screenshot, because they're all essentially chat multiplexers. Every one of them answers "how do I see all my agents at once?" rather than "which of these agents needs me right now, and why?"

The issue is that while these tools make it easy to switch between sessions, they don't solve the problem above. It only gets worse once your agents start delegating tasks to subagents. When you build with many agents, your job isn't coder or reviewer; it's decider. Your tooling should support that by intelligently surfacing the things that need your attention, rather than spewing content and hoping you catch it.

You don't need a better chat multiplexer; you need a decision queue, one fed by trees of agents, where only what they can't resolve reaches you. I've been trying to figure out what it should look like.

Here's where it's at right now.

AgentBeacon ops view: six running agents, three with questions.

This problem is not new; it shows up whenever someone delegates tasks and is responsible for the outcomes. Organizations hit this trap long before AI agents existed, and they converged, across industries and centuries, on a specific response. Put a layer between the principal and the noise. Let that layer frame questions, surface options, and resolve whatever can be resolved at a lower level. Send upward only what cannot be settled below, and send it in a shape the principal can decide from quickly. The layer has different names in different organizations, and so does the artifact it produces, but the core idea is the same.

Jeff Bezos described one Amazon ritual built around this instinct in his 2017 shareholder letter: "We don't do PowerPoint (or any other slide-oriented) presentations at Amazon. Instead, we write narratively structured six-page memos. We silently read one at the beginning of each meeting in a kind of 'study hall.'" The letter is about high standards in memo writing, but the organizational instinct underneath it is what matters here. Do the synthesis before the meeting. Narrow the options before the room. Spend the principal's time on what is still unresolved, not on raw material that should have been processed lower down. A chief of staff plays a similar role: deciding what reaches the principal. The role exists across organizations because the problem it solves is not optional; the fact that attention triage justifies a dedicated full-time job is evidence that it is a first-class organizational problem.

The military wrote the procedure down. The US Army's current FM 6-0 says decision briefings vary with the commander's familiarity with the subject. When the subject is familiar, the format often resembles a decision paper: problem statement, essential background, impacts, and a recommended solution. The briefing does the synthesis, the framing, and the tradeoff analysis upstream. When the decision lands on the person with the authority to make it, it lands in a shape that respects their time.

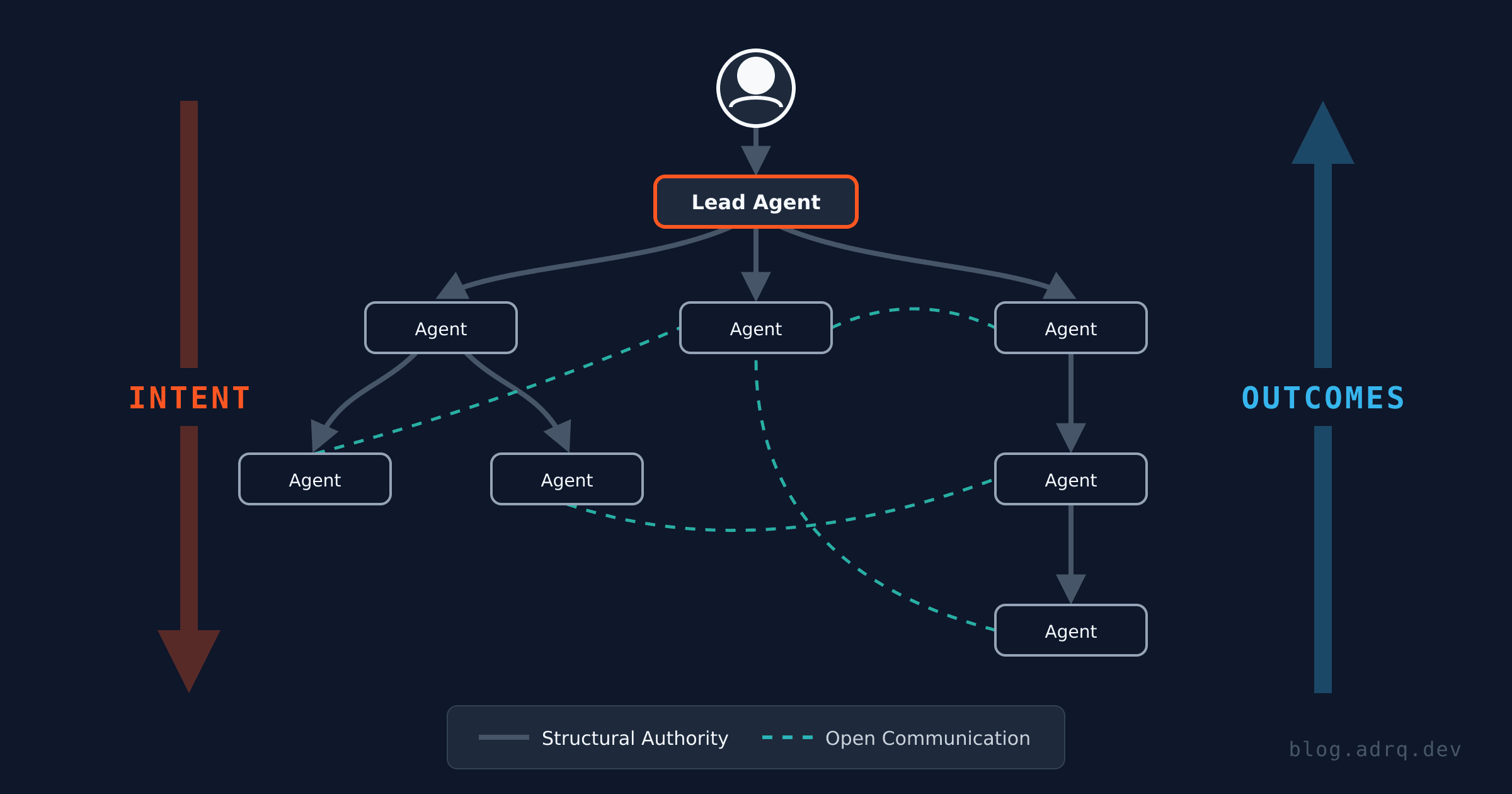

My last post argued that agents need an authority tree: each parent owns its children, absorbs their complexity, and escalates only genuine ambiguity upward. A natural result of that is a decision queue at the top. Only when something is actually ambiguous (a product decision, user-facing scope, an architecture tradeoff, a security policy call) does the question reach you. And when one of those reaches you, it arrives shaped like a decision briefing: context, structured options, and a recommendation.
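To make the escalation path concrete, here is a minimal Python sketch of an authority tree feeding a single decision queue. It is not AgentBeacon's implementation, and every name in it (`Briefing`, `AgentNode`, `ask`, `handle`, the `kind` field, the `lead_policy` resolver) is hypothetical. Each node gets a resolver that either settles a child's question or declines it; declined questions climb the tree, and only what the root also declines lands in the human's queue, already shaped as a briefing.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Briefing:
    """Hypothetical briefing shape: context, structured options, a recommendation."""
    asked_by: str
    kind: str  # hypothetical category tag, e.g. "branch_name", "security_policy"
    context: str
    options: list[str]
    recommendation: str


# A resolver returns the chosen option, or None if the question exceeds its authority.
Resolver = Callable[[Briefing], Optional[str]]


@dataclass
class AgentNode:
    name: str
    resolver: Resolver
    parent: Optional[AgentNode] = None
    queue: Optional[list[Briefing]] = None  # only the root owns the human's queue

    def spawn(self, name: str, resolver: Resolver) -> AgentNode:
        """Create a child agent owned by this node."""
        return AgentNode(name=name, resolver=resolver, parent=self)

    def ask(self, briefing: Briefing) -> Optional[str]:
        """Send a question upward. Returns an answer, or None if it was queued for the human."""
        if self.parent is None:
            # Nobody above the root: the question becomes a human decision.
            assert self.queue is not None, "root node must own the decision queue"
            self.queue.append(briefing)
            return None
        return self.parent.handle(briefing)

    def handle(self, briefing: Briefing) -> Optional[str]:
        """Settle a child's question here if this node's policy allows; otherwise pass it up."""
        answer = self.resolver(briefing)
        if answer is not None:
            return answer  # absorbed at this level; the human never sees it
        return self.ask(briefing)


def never(b: Briefing) -> Optional[str]:
    """Resolver that declines everything."""
    return None


def lead_policy(b: Briefing) -> Optional[str]:
    """Hypothetical policy: routine naming questions take the recommended option."""
    return b.recommendation if b.kind == "branch_name" else None


human_queue: list[Briefing] = []
root = AgentNode("orchestrator", resolver=never, queue=human_queue)
lead = root.spawn("backend-lead", lead_policy)
agent_two = lead.spawn("agent-2", never)
agent_six = lead.spawn("agent-6", never)

branch = agent_two.ask(Briefing(
    "agent-2", "branch_name", "Need a branch for the retry fix",
    ["fix/retry", "retry-fix"], "fix/retry"))
secret = agent_six.ask(Briefing(
    "agent-6", "security_policy", "Hardcoded secret found in config/dev.yaml",
    ["treat as real and rotate", "treat as stub"], "treat as real and rotate"))

print(branch, [b.kind for b in human_queue])  # fix/retry ['security_policy']
```

In this sketch, escalation is the default path and resolution is something each level opts into, so adding a level to the tree can only shrink what reaches the queue, never grow it. The branch-name question is absorbed by the lead; the security call is the only thing you see.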

One fair critique of multi-agent coding that I keep seeing is that running a fleet of agents in parallel feels like progress but usually isn't; the motion masks the absence of results. That is correct for unstructured parallelism. Watching terminals and chat windows scroll is motion, not work. But when you wrap that parallelism in a structure that filters escalations into a single queue, you suddenly have leverage. At that point your job is not watching motion; it is answering the handful of questions the team could not answer on its own, which is what you were supposed to be doing all along. Parallelism then becomes a way of getting more decisions to the place where decisions actually happen.

The ops view above is live code. AgentBeacon is open source under Apache 2.0 if you want to try it. It's early, so expect papercuts.