Multi-Agent Coordination Primitives

How many coordination primitives does your multi-agent framework need? The field is converging on a shared goal of effective multi-agent coordination but seems unsure about the mechanisms to get there.

There is a pervasive sense of re-invention and confusion between scaffolding tools and real coordination primitives. The coordination surface of current AI agent frameworks ranges from a handful of tools to over a dozen operations, without clear agreement on the fundamental operations that multi-agent coordination systems need. Where do you draw the line?

See for example:

- Claude Code Agent Teams (Anthropic, experimental; 13+ operations): `spawnTeam`, `cleanup`, `write`, `broadcast`, `approvePlan`, `rejectPlan`, `requestShutdown`, `approveShutdown`, task management (`TaskCreate`, `TaskUpdate`, `TaskList`, `TaskGet`), shared task boards, file-based mailboxes, dependency tracking, plan approval workflows.

- Codex CLI (OpenAI, experimental): `spawn_agent`, `send_input`, `wait_agent`, `close_agent`, `resume_agent`, `spawn_agents_on_csv` (hierarchical parent-child, configurable depth, no peer messaging).

- Gas Town (by Steve Yegge): role-based hierarchy (Mayor, Polecats, Refinery, Witness, Deacon, Crew), Beads (Git-backed work tracking), Hooks (work queues), GUPP, Sweeps, Agent Mail integration.

- CrewAI: delegation tools (`Delegate work to coworker`, `Ask question to coworker`), hierarchical process with manager agent, sequential process, Flows with `@router` conditional routing, role/goal/backstory agent definitions.

- LangGraph: edges, conditional routing via `add_conditional_edges()`, state with reducers, checkpointing, `Send` for dynamic fan-out, `Command` for programmatic control.

- Google ADK: `SequentialAgent`, `ParallelAgent`, `LoopAgent` (workflow agents), `AgentTool`, `transfer_to_agent()`, shared session state.

TLDR: You only need two coordination primitives. The rest is either communication (let the model handle it) or human interface (a separate concern).

Computer Science meets Organization Theory

Both computer science and organizational design theory have studied coordination for decades — different vocabularies, different concerns, but overlapping conclusions. The recurring patterns across both traditions converge on the following 11 primitives.

| Primitive | What it is | Theoretical roots | Framework examples |
|---|---|---|---|
| TELL | Assert information to another agent | Actor model `send`; Mintzberg informal communication | Agent Teams `message`, `broadcast`; Codex `send_input`; LangGraph shared state with `add_messages` reducer |
| ASK | Request information or action | FIPA `request`; CSP input | CrewAI `Ask question to coworker`; ADK `AgentTool` (wraps agent as callable tool) |
| CREATE | Spawn a child, establish authority | Actor model `create`; Unix `fork()` | Agent Teams `spawnTeam`; Codex `spawn_agent`; Gas Town Mayor dispatches work |
| DESTROY | Terminate a child and subtree | Erlang supervision trees `terminate_child`; Unix `kill()` | Agent Teams `requestShutdown`; Codex `close_agent` |
| CLAIM | Atomically acquire exclusive access | Linda `in` | Agent Teams task claiming (file-locked). Rare in practice; see below. |
| SYNC | Block until agents converge | CSP parallel composition | Codex `wait_agent`; LangGraph `Send` fan-out + superstep convergence |
| ESCALATE | Transfer a decision upward | Management by exception; military C2 | Agent Teams plan approval requests (teammate submits to lead); LangGraph `interrupt()` (pauses execution, surfaces to human/parent) |
| NEGOTIATE | Iterative exchange to reach agreement | Contract Net Protocol bidding; org theory lateral negotiation | No mainstream framework implements negotiation in the Contract Net sense |
| OBSERVE | Monitor for state changes | Linda `rd`; pub-sub pattern | Agent Teams shared task boards; ADK shared session state (read-on-demand); LangGraph state channels (nodes read state written by others) |
| SCOPE | Define a communication boundary | CSP hiding; MOISE+ structural dimension | Agent Teams file-based mailboxes; Gas Town Rigs; LangGraph subgraphs (own state schema); CrewAI crew boundaries (agents scoped to a crew) |
| REPORT | Send aggregated status upward | Contract Net Protocol report phase | Agent Teams task status updates; Codex parent-child result return |

This list probably isn't perfect. Some of these may collapse into each other, others may be missing. But it gives us something to work with. The real engineering question is: how many of these actually need to be built out as infrastructure?

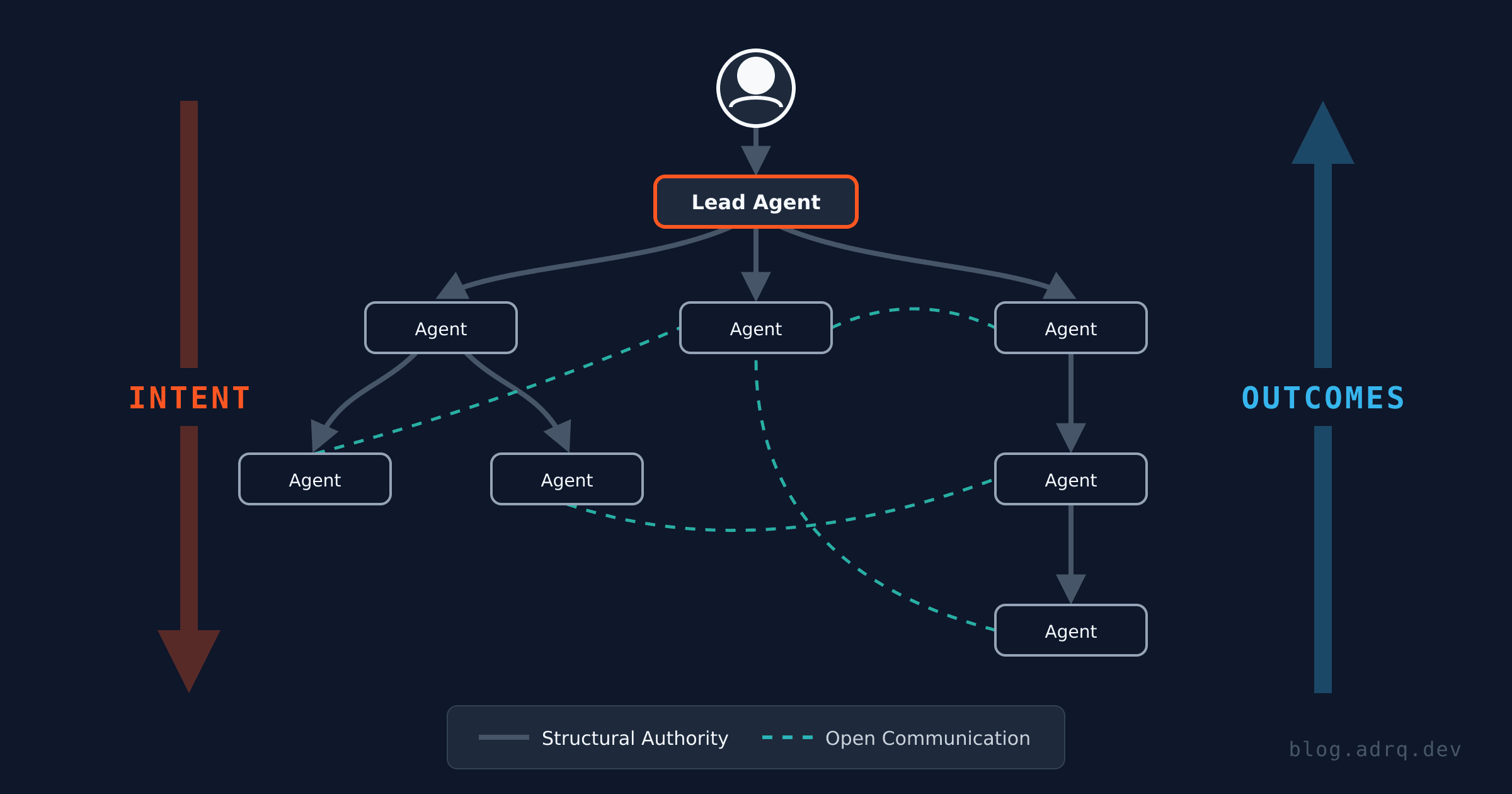

Taking inspiration from Richard Sutton's "bitter lesson", which observes that hand-engineered approaches in AI tend to lose to general methods as computation scales, we can apply a similar lens to agent coordination infrastructure. Some teams are already finding that removing tools can improve performance rather than degrade it. We could take this to the extreme and say "no infrastructure is needed" or "swarms of autonomous agents will solve everything", but there is no historical precedent for this ever working at scale. By "this" I mean undirected, non-hierarchical swarms of entities building a complex system and maintaining it over time. Even ant colonies, the canonical example of leaderless coordination, only produce repetitive, stereotypical output, honed by millions of years of evolution. Building complex systems requires authority structures: Linux has a BDFL, Wikipedia has admins, you (probably) have a boss.

I will explore this in more detail in a future post, but to answer the question from earlier we can use a simple heuristic to categorize our coordination primitives. Each primitive above can be categorized as one of:

- requires infrastructure enforcement

- the model can handle it through conversation

Out of all the primitives above, only two require external enforcement in an organizational setting: CREATE and DESTROY. The rest can all be reduced to communication when you really stop to think about it. If an agent sends a bad message, it can just correct it in the next one. Two agents can negotiate a contract using natural language (whether they adhere to the contract is a separate story, but that applies to all systems where autonomous entities interact). Even synchronization and exclusive access (SYNC, CLAIM), which sound like they might need formal mechanisms, are things that high-functioning teams handle through conversation every day: "I'm working on the auth module, don't touch it" or "let me know when you're done." Think of a well-run Zoom stand-up: you don't need to fight over the unmute button, you just talk. But you can't "talk" another team member into existence.

CREATE needs infrastructure because it implies resource allocation. In a human org, if you want to add someone to your team, you need to go through the proper channels for recruiting, HR, onboarding, budgeting, and so on. In a human organization "spawn" means "add to organizational structure" rather than literally "spawn from nothing". In the AI agents world this means "add to coordination unit". Whether the agent is pre-existing (i.e., a human being brought in as an employee) or new to this world (a fresh AI agent process), we care about its lifecycle as a part of the organization or coordination unit. For AI agents, spawning a new one means allocating compute, worktrees, auth, process ownership, and potentially gigabytes of memory (looking at you, Claude Code). A model can make this request, just like you can ask HR to post a job opening, but the organizational infrastructure and processes need to actually set it in motion. "But at my last company we just hired a person on the spot off the street and it worked out great." How big was that company? Two people working in a coffee shop? Unstructured spawning doesn't scale.

DESTROY is less obvious. The first instinct might be to think this can just be handled in conversation, and to some extent it can. An agent can send a message to a child agent asking it to shut down, just as you can tell your employee to stop what they are doing, or fire them. But where conversation breaks down is enforceability. You might have a rogue agent going off script, or an employee who refuses to leave. Human organizations have developed processes and systems to deal with this: cancel the ID badge, lock them out of company accounts, escort them out of the building. AI agents need similar infrastructure and systems in place to enforce the organizational lifecycle of an entity. If an agent hangs, gets stuck in a loop, or ignores its parent's instructions, we need a reliable way to terminate the process.
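To make the enforceability point concrete, here is a minimal sketch in Python, assuming each agent runs as an ordinary OS process owned by its parent. The `destroy` helper, the grace period, and the `STUCK_AGENT` script are hypothetical names invented for illustration; real agent runtimes will look different. The idea is simply to ask nicely first, then escalate to a kill the child cannot ignore.

```python
import subprocess
import sys


def destroy(child: subprocess.Popen, grace_seconds: float = 2.0) -> int:
    """Terminate a child process whether or not it cooperates.

    Sends a polite termination request first (SIGTERM on POSIX), then
    falls back to a forced kill (SIGKILL on POSIX) if the child is still
    alive after the grace period. Returns the child's exit code.
    """
    if child.poll() is not None:
        return child.returncode  # already exited on its own
    child.terminate()  # the "polite message"
    try:
        return child.wait(timeout=grace_seconds)
    except subprocess.TimeoutExpired:
        child.kill()  # enforcement: the child cannot opt out of this
        return child.wait()


# Hypothetical stand-in for a stuck agent: it ignores SIGTERM and loops forever.
STUCK_AGENT = (
    "import signal, time\n"
    "signal.signal(signal.SIGTERM, signal.SIG_IGN)\n"
    "print('ready', flush=True)\n"
    "while True:\n"
    "    time.sleep(0.1)\n"
)

if __name__ == "__main__":
    child = subprocess.Popen(
        [sys.executable, "-c", STUCK_AGENT], stdout=subprocess.PIPE
    )
    child.stdout.readline()  # wait until the child has installed its handler
    exit_code = destroy(child, grace_seconds=0.5)
    child.stdout.close()
    print("child is gone, exit code:", exit_code)
```

The polite request is still communication; only the fallback is infrastructure. That fallback is the part a conversation cannot replace.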

You may have noticed that the DESTROY primitive appears to be mostly sourced from real engineering implementations. Most theoretical models assume that processes are self-terminating. Process calculi formalized self-completion, i.e., a process that finishes and becomes inert. The real world is a lot messier. Agent sessions don't always finish: they get stuck in loops, or they stop responding. The parent needs a mechanism that works whether or not the child cooperates, for the same reason that a company needs HR procedures for termination and not just a polite message. Erlang's supervision trees and Unix signals both arrived at the same answer: the entity that creates must be able to destroy.
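One way to picture "the entity that creates must be able to destroy" is a small lifecycle ledger that every CREATE and DESTROY has to pass through. The sketch below is purely illustrative: `Registry`, `AgentRecord`, the memory budget, and the `"operator"` actor are assumptions of mine, not the API of any framework mentioned in this post. It only does bookkeeping (who spawned what, what it costs, who has authority) and leaves the actual process handling out.

```python
from __future__ import annotations

import time
from dataclasses import dataclass, field


@dataclass
class AgentRecord:
    agent_id: str
    parent_id: str | None  # who is responsible for this agent
    memory_mb: int         # what it costs (illustrative unit)
    alive: bool = True


@dataclass
class Registry:
    """Hypothetical ledger: all CREATE and DESTROY calls go through it."""
    memory_budget_mb: int
    agents: dict[str, AgentRecord] = field(default_factory=dict)
    audit_log: list[tuple[float, str, str, str]] = field(default_factory=list)

    def _log(self, actor: str, action: str, target: str) -> None:
        self.audit_log.append((time.time(), actor, action, target))

    def _ancestors(self, agent_id: str) -> list[str]:
        chain = []
        current = self.agents[agent_id].parent_id
        while current is not None:
            chain.append(current)
            current = self.agents[current].parent_id
        return chain

    def used_mb(self) -> int:
        return sum(a.memory_mb for a in self.agents.values() if a.alive)

    def create(self, parent_id: str | None, agent_id: str, memory_mb: int) -> AgentRecord:
        if agent_id in self.agents:
            raise ValueError(f"{agent_id} already exists")
        if parent_id is not None:
            parent = self.agents.get(parent_id)
            if parent is None or not parent.alive:
                raise PermissionError(f"{parent_id} cannot create agents")
        if self.used_mb() + memory_mb > self.memory_budget_mb:
            raise RuntimeError("memory budget exceeded")
        record = AgentRecord(agent_id, parent_id, memory_mb)
        self.agents[agent_id] = record
        self._log(parent_id or "operator", "CREATE", agent_id)
        return record

    def destroy(self, actor_id: str, agent_id: str) -> list[str]:
        """Terminate an agent and its subtree; only an ancestor may do so."""
        if actor_id not in self._ancestors(agent_id):
            raise PermissionError(f"{actor_id} has no authority over {agent_id}")
        subtree = [agent_id] + [
            other for other in self.agents if agent_id in self._ancestors(other)
        ]
        terminated = []
        for target in subtree:
            if self.agents[target].alive:
                self.agents[target].alive = False
                self._log(actor_id, "DESTROY", target)
                terminated.append(target)
        return terminated


registry = Registry(memory_budget_mb=4096)
registry.create(None, "lead", 1024)
registry.create("lead", "worker-1", 1024)
registry.create("worker-1", "helper", 1024)
print(registry.destroy("lead", "worker-1"))  # worker-1 and its helper both go
```

A peer calling `destroy` on `worker-1` would get a `PermissionError`, and every action leaves an entry in `audit_log`. In a real system the `alive = False` line is where an actual process kill would hook in.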

"But if my agent has a Bash tool it doesn't need any infra." Sure, a model with terminal access can technically do anything, including claude -p 'go rogue'. But "technically can" isn't the same as "organizationally should." That's the agent equivalent of you hiring your friend off the street, giving them a desk, no paperwork, and hoping it works out. Maybe some work gets done, but you can imagine how this ends. When things go wrong, there's no audit trail of who spawned what, no resource management, no authority hierarchy, and no guarantee that "fired" actually means fired. Maybe they handed their badge to someone else on the way out. CREATE and DESTROY need to be infrastructure because the organization needs to know what's running, what it costs, and who's responsible for each running entity.

Two Is All You Need

If you go back to the framework list from the opening and run each tool through this filter, the same pattern shows up every time. `spawnTeam` and `requestShutdown`. `spawn_agent` and `close_agent`. `fork()` and `kill()`. Everything else (the messaging, the broadcasting, the task boards, the approval workflows, and so on) is just communication disguised as infrastructure.

Teams are finding that having fewer tools generally improves agent performance. Vercel removed 80% of their agent's tools and saw a 3.5x speedup, Cloudflare found that agents handle complex APIs better when they write code instead of calling tools directly, and Anthropic measured a 98.7% token reduction when agents have freedom to explore their tools vs. loading all upfront. If this holds for API tools, it applies even more to coordination tools, where each additional primitive is another point of failure in a multi-agent chain.

I have a strong suspicion that frameworks will converge on thinner coordination surfaces over the next few model generations. Infrastructure for authority: who gets created, who gets terminated, and who decides. Conversation for everything else. If this is wrong, adding a tool later is easy. If it's right, frameworks that built tools for negotiation, synchronization, and claiming are carrying dead weight, and that dead weight compounds. Google Research found that independent multi-agent systems amplify errors 17.2x compared to single-agent baselines. Each additional coordination step in a chain eats into your end-to-end reliability.
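The reliability point can be made with back-of-the-envelope arithmetic. The sketch below assumes every coordination step succeeds independently with the same probability, which is a simplification, and the 0.98 figure is an illustrative number of mine, not a result from any study cited here. Under those assumptions, end-to-end reliability is the per-step success rate raised to the number of steps.

```python
def chain_reliability(per_step_success: float, steps: int) -> float:
    """Success probability of `steps` independent steps run in series."""
    if not 0.0 <= per_step_success <= 1.0:
        raise ValueError("per_step_success must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step_success ** steps


# Illustrative only: a 98% per-step success rate, chained.
for steps in (1, 5, 10, 20):
    print(f"{steps:>2} steps -> {chain_reliability(0.98, steps):.3f}")
```

With these made-up numbers, ten chained steps already drop to roughly 82% and twenty to roughly 67%, which is why every extra coordination primitive in the chain has to earn its place.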

This is a bet on the trajectory of model improvement. Models will keep getting better at communication, but just as in human organizations, the create/destroy, hire/fire operations need to be regulated and enforceable.

In subsequent posts I will show what a system built on these principles looks like in practice, starting with the problem none of these primitives solve: what happens when an organization of agents needs human input?

Further reading

- Kim et al., Towards a Science of Scaling Agent Systems (Google Research, 2025) — the 17.2x error amplification study

- Cemri et al., Why Do Multi-Agent LLM Systems Fail? (2025) — failure taxonomy across 1,642 execution traces

- Joe Armstrong, Making reliable distributed systems in the presence of software errors (2003) — the Erlang/OTP supervision thesis